Hi! I’m Ziqi Liu 刘子琦

I’m a first-year M.Phil. student at the UbiquitousX Lab at the Hong Kong University of Science and Technology (HKUST), supervised by Qijia Shao. Before joining HKUST, I received my B.Eng. degree from Tsinghua University, where I was supervised by Yuntao Wang and Haipeng Mi.

My research focuses on the synergy between AI-driven sensing and adaptive intervention. By integrating hardware and software innovations across ubiquitous devices and wearables, I design and develop closed-loop systems that tightly couple the implicit, continuous sensing of behavioral and physiological states with just-in-time, context-aware interventions.

I have gained valuable research experience in several labs, including the Future Lab and the Pervasive HCI Group at Tsinghua University.

Research Interests

Pervasive Sensing

- Everyday devices — repurposing built-in sensors to capture rich signals during natural interaction.

- Custom wearables — designing devices that unobtrusively collect modalities beyond commodity hardware.

Personalized State Inference

- Interaction intent — recognizing implicit goals and attention patterns for interaction applications.

- Health & cognitive states — inferring physiological and psychological indicators for continuous health monitoring.

Just-in-Time Intervention

News

| Dec 08, 2025 | Our project “Hand Motion Prediction for Adaptive Smartphone UI with Zero-permission Sensors” has won the Best Award for UROP at HKUST! Congratulations to Ziyi and the team! 🎉 |
|---|---|
| Nov 08, 2025 | Spent a wonderful week at MobiCom 2025 in Hong Kong as a student volunteer! 📸 |
| Aug 15, 2025 | Arrived in Hong Kong and started my M.Phil. at HKUST! 🌊 |
| Jun 22, 2025 | I have graduated from Tsinghua University with a Bachelor’s degree in Engineering! 🎓 |
| Jun 05, 2025 | I passed my undergraduate thesis defense! 🗣️ |

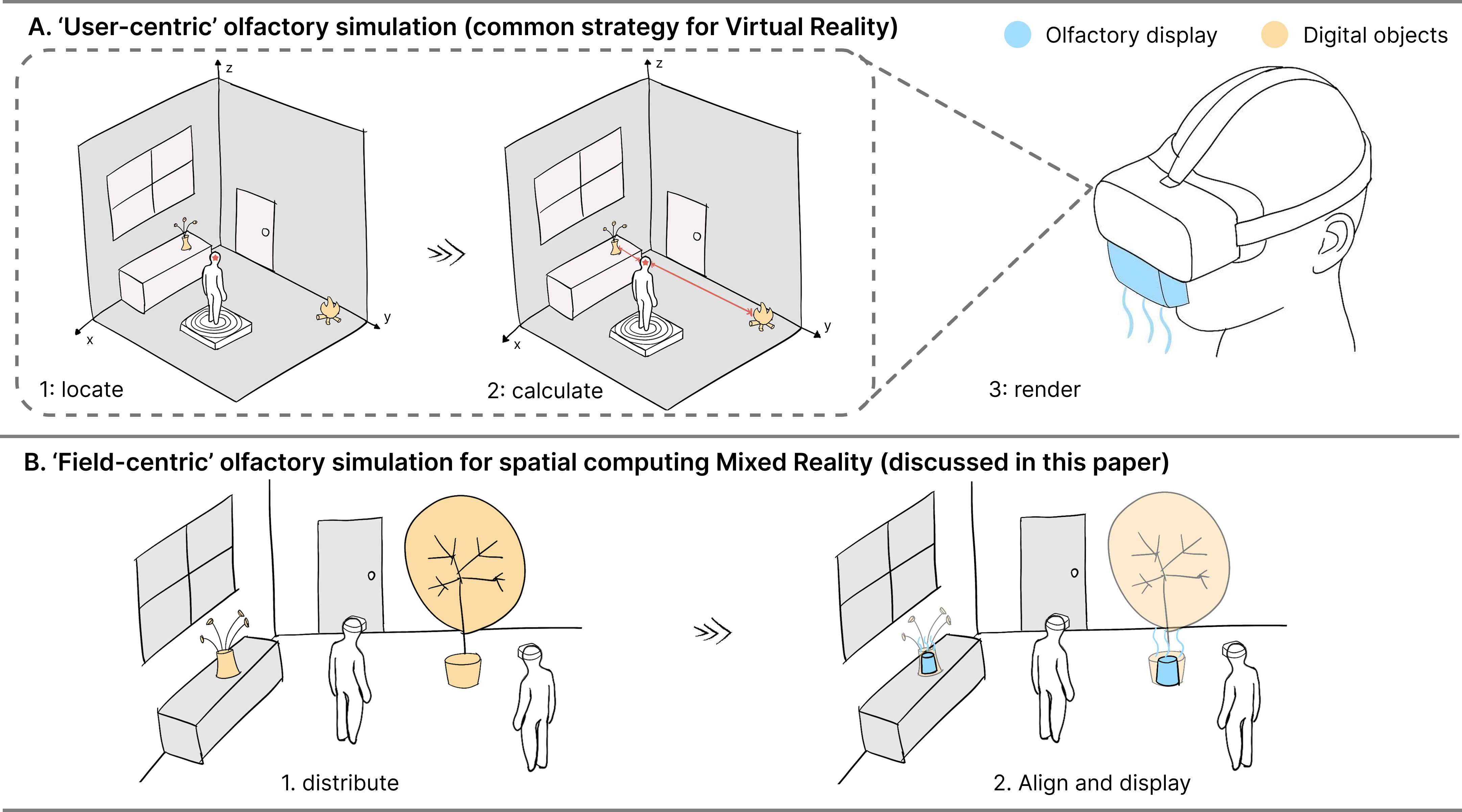

| Feb 23, 2025 | Our paper “AroMR: Decentralizing Olfactory Displays into the Environment for Olfactory-Augmented Experiences in Mixed Reality” has been accepted to CHI 2025 Late Breaking Work! 🎉 |

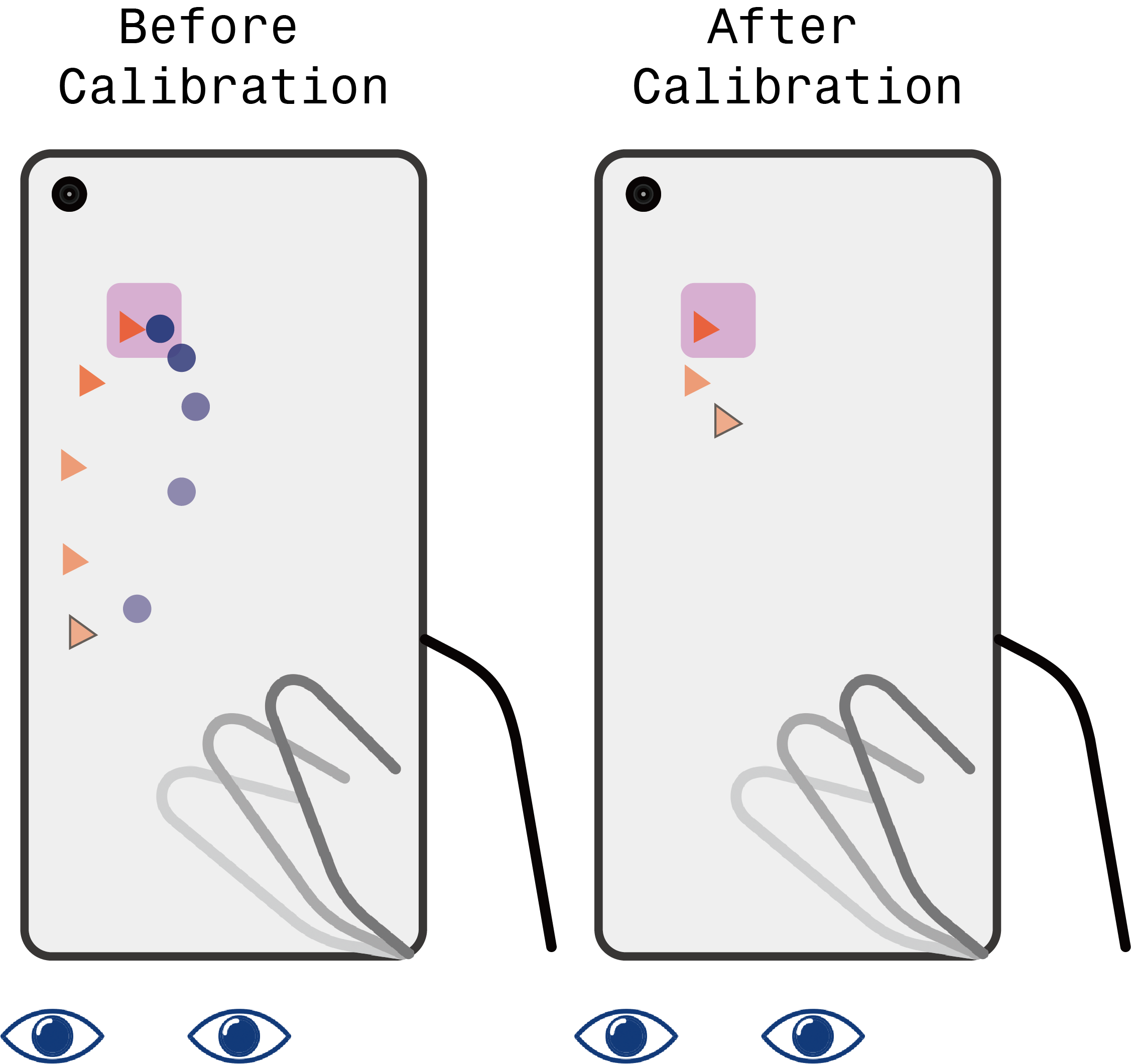

| Jan 17, 2025 | Our paper “Enhancing Smartphone Eye Tracking with Cursor-Based Interactive Implicit Calibration” has been accepted to CHI 2025! 🎉 |

| Oct 11, 2024 | Launched my new personal website! 🥳 |